Real User Monitoring: How It Works, Why It Matters and How It Helps Improve Digital Experiences

Digital performance has become a defining factor in how financial services organizations compete, retain customers and protect brand trust. Whether users are checking account balances, applying for loans, submitting insurance claims or executing trades, expectations are high and tolerance for friction is low. Slow load times, broken pages or unresponsive interactions don’t just create frustration. They can directly impact conversion rates, regulatory risk and long-term customer loyalty.

This growing reliance on digital channels has increased the need for better visibility into real user experiences. Traditional analytics and infrastructure monitoring tools provide useful data, but they often stop short of explaining how performance issues actually affect customers in the moment. That gap is where real user monitoring (RUM) plays a critical role.

Real user monitoring captures performance and behavioral data directly from real users as they interact with websites and applications. Instead of relying on simulated tests or aggregated metrics, RUM shows what actual users experience across devices, browsers and network conditions. It reveals how performance impacts the user journey in real time and where friction occurs.

By leveraging real user monitoring data, organizations can improve website performance, optimize Core Web Vitals, identify frontend performance issues and make informed decisions that enhance user satisfaction. This guide explains what RUM is, how it works, how it compares to synthetic monitoring and why it is essential for financial services teams focused on digital experience monitoring and application performance.

What is Real User Monitoring?

Real user monitoring is a performance monitoring approach that measures how real users experience a website or application. Unlike traditional web analytics platforms that focus on traffic volume, conversion rates and attribution, RUM focuses on experience quality.

A RUM tool tracks metrics as actual users experience them, including:

Page load time

Response time

Core Web Vitals

JavaScript errors

Navigation events

This data is collected passively in the background and reflects real-world conditions rather than controlled test environments.

The distinction between RUM and traditional analytics is important: Analytics tools measure what users do, while RUM measures how well the experience performs while users do it. In financial services, this difference is especially meaningful. A user may technically complete a transaction, but if the experience was slow, confusing or error-prone, trust may already be eroded. Analytics alone rarely capture that nuance.

RUM provides visibility into performance issues that directly affect real users. It surfaces slow pages, broken interactions and errors that may only occur under specific conditions, such as on certain devices or network types. This visibility helps teams understand why users abandon sessions, retry actions or repeatedly click the same element.

Understanding frustration is a key advantage of RUM. Repeated errors, form failures or delayed responses often indicate deeper experience problems. Tools like struggle error analysis help teams identify these moments and prioritize fixes that reduce friction and improve user satisfaction.

How Real User Monitoring Works

At its core, RUM works by observing digital experiences as they actually unfold. Rather than relying on simulated tests or historical summaries, RUM captures performance and behavior data in real time as users interact with websites and applications. This approach provides a direct view into how systems perform under real-world conditions, across real devices and networks.

Instrumentation and Data Capture

RUM begins with lightweight instrumentation embedded directly into the frontend of a website or application. This typically takes the form of browser-based JavaScript tags for web experiences or SDKs for mobile applications. These components run quietly in the background, collecting performance and interaction data without interrupting the user session or affecting application behavior.

As users navigate pages, submit forms or trigger application events, the instrumentation records how the experience responds. This includes both technical performance signals and user interaction events, allowing teams to understand not only what happened but how it affected the user in that moment.

Key data captured includes:

Page load time and the sequence in which page elements load

Response time for network and application programming interface (API) requests

Core Web Vitals metrics, including largest contentful paint and cumulative layout shift

JavaScript errors, failed requests and frontend exceptions

Navigation events, route changes and session flow

Together, these performance metrics and interaction signals form the foundation of RUM data.
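
To make the captured data more concrete, here is a minimal sketch of how a single RUM event might be represented before it is sent for analysis. The `RumEvent` class, its field names and the `to_beacon` helper are illustrative assumptions, not the schema of any particular RUM product.

```python
import json
from dataclasses import dataclass, asdict
from typing import Optional


@dataclass
class RumEvent:
    """One hypothetical RUM record; field names are illustrative only."""
    session_id: str
    page_url: str
    event_type: str                        # e.g. "page_load", "api_request", "js_error"
    timestamp_ms: int                      # client clock, milliseconds since epoch
    duration_ms: Optional[float] = None    # load or response time, when applicable
    lcp_ms: Optional[float] = None         # largest contentful paint
    cls: Optional[float] = None            # cumulative layout shift (unitless)
    error_message: Optional[str] = None    # set only for error events


def to_beacon(event: RumEvent) -> str:
    """Serialize an event to JSON, dropping fields that were not measured."""
    payload = {k: v for k, v in asdict(event).items() if v is not None}
    return json.dumps(payload, sort_keys=True)


page_load = RumEvent(
    session_id="s-123",
    page_url="/accounts/overview",
    event_type="page_load",
    timestamp_ms=1_700_000_000_000,
    duration_ms=1840.0,
    lcp_ms=1620.0,
    cls=0.04,
)
beacon = to_beacon(page_load)
print(beacon)
```

Keeping unmeasured fields out of the payload is one way to keep each record small, which matters when the instrumentation runs inside every user session.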

Contextualizing Real User Monitoring Data

Raw performance metrics alone rarely tell the full story. To make RUM data actionable, it is enriched with contextual information about the user and their environment. This additional layer of detail helps teams understand why performance issues occur and who is affected.

Common contextual attributes include:

Device type and screen size

Browser and browser version

Operating system

Geographic location

Network conditions, such as connection type or latency

With this context, teams can isolate performance bottlenecks that affect specific user segments. For example, an issue may only impact mobile users on slower networks or customers accessing the application from a particular region. Without RUM, these patterns are often difficult to detect.
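
The kind of segmentation described above can be sketched in a few lines. The sample data, the segment labels and the `median_by_segment` helper are all hypothetical; a real platform would work on far larger data sets and richer attributes.

```python
from collections import defaultdict
from statistics import median

# Hypothetical page-load samples: (device_type, connection_type, load_time_ms)
samples = [
    ("desktop", "wifi", 900),
    ("desktop", "wifi", 1100),
    ("mobile", "wifi", 1300),
    ("mobile", "wifi", 1500),
    ("mobile", "3g", 4200),
    ("mobile", "3g", 5100),
    ("mobile", "3g", 4800),
]


def median_by_segment(rows):
    """Group load times by (device, connection) and return the median per segment."""
    groups = defaultdict(list)
    for device, connection, load_ms in rows:
        groups[(device, connection)].append(load_ms)
    return {segment: median(values) for segment, values in groups.items()}


segment_medians = median_by_segment(samples)
slowest_segment = max(segment_medians, key=segment_medians.get)
print(slowest_segment, segment_medians[slowest_segment])
```

In this made-up data, the overall numbers would hide the fact that mobile users on a slow connection wait several times longer than everyone else, which is the pattern contextual attributes are meant to expose.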

Passive Monitoring and Real-World Variability

Unlike synthetic monitoring, RUM is passive by design. It does not generate traffic or simulate behavior. Instead, it observes what real users experience as they interact with the application in their natural environment.

This passive approach is especially valuable for capturing real-world variability. Users access financial services applications from a wide range of devices, browsers and network conditions. RUM reflects that diversity, surfacing edge cases and intermittent issues that scripted tests may never encounter.

Aggregation, Analysis and Visualization

Once collected, RUM data is aggregated and analyzed within centralized dashboards. These views surface trends, anomalies and performance issues across pages, sessions and user journeys. Teams can filter and segment data by attributes such as geography, device type or application version to quickly narrow in on root causes.

Advanced RUM platforms go beyond charts and tables by providing visual tools that help interpret user behavior. Features such as interaction maps and heatmaps show where users click, scroll or pause on a page. This behavioral context adds depth to performance data, making it easier to see how slow load times, layout shifts or errors disrupt the user journey.

By connecting performance metrics with actual user behavior, RUM transforms technical data into experience-level insight. Teams gain a clearer understanding of not just where problems occur, but how those problems affect users in real time.

Real User Monitoring vs Synthetic Monitoring

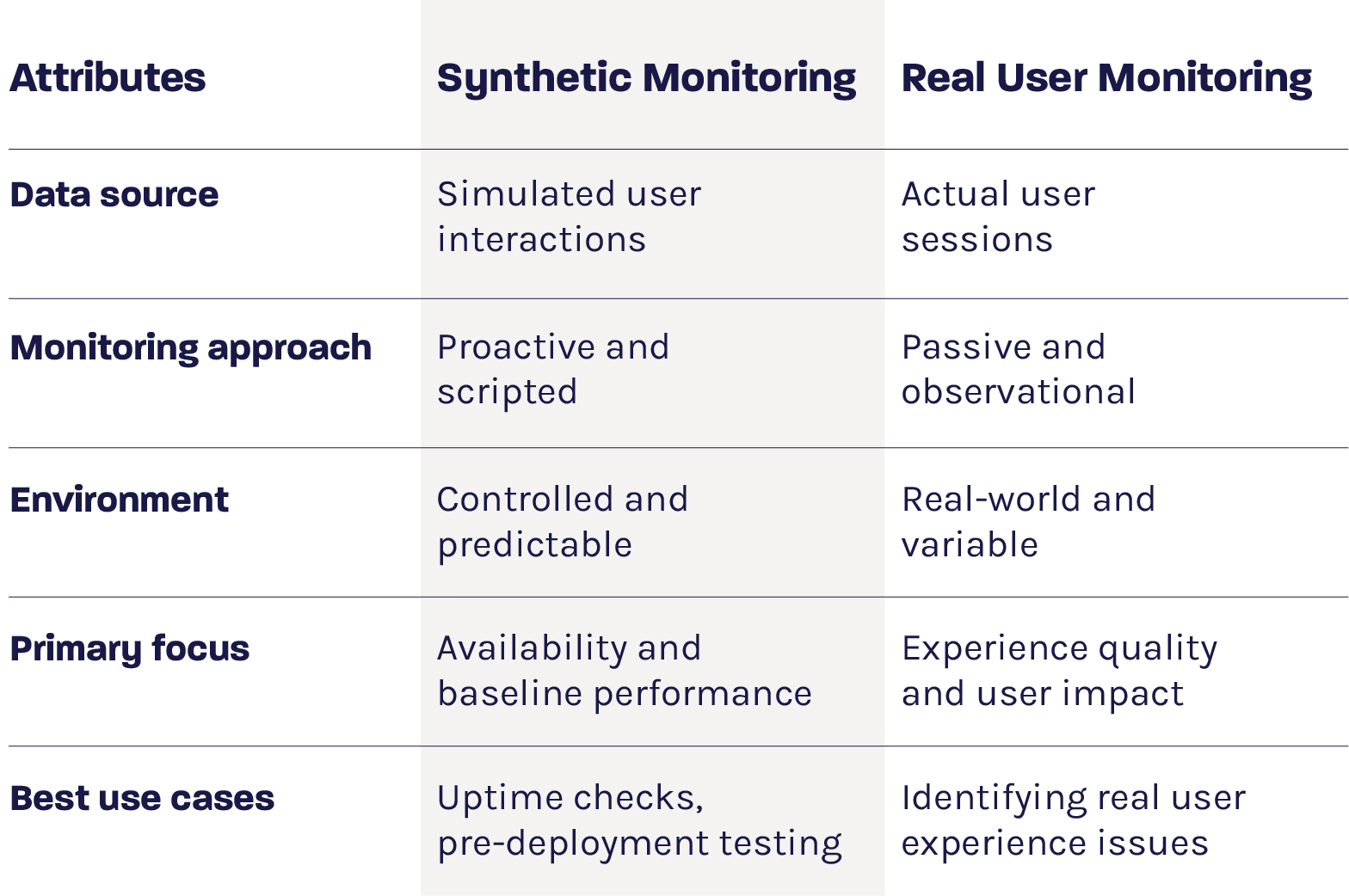

RUM and synthetic monitoring are often discussed together because they address the same goal: understanding and improving digital performance. However, they approach that goal from very different angles. Each method provides a distinct type of visibility, and understanding how they differ is essential for building an effective performance monitoring strategy.

Synthetic monitoring relies on scripted tests that simulate user actions, such as loading a page, logging in or completing a transaction. These tests run at scheduled intervals from predefined locations and environments, making them highly controlled and repeatable. As a result, synthetic monitoring is well-suited for proactive checks, availability monitoring and establishing baseline performance expectations.

RUM takes the opposite approach. Instead of simulating behavior, it captures what actually happens when real users interact with an application. It reflects real devices, browsers, network conditions and usage patterns, providing insight into how performance impacts the user experience in real-world scenarios.

RUM excels at uncovering issues that synthetic monitoring cannot detect. These include performance degradation affecting specific regions, frontend errors triggered by unique user behavior or problems caused by third-party services that only appear under real conditions.

At the same time, RUM alone cannot anticipate issues before users encounter them. Synthetic monitoring helps fill that gap by identifying failures early. When used together, the two approaches provide complementary insights, giving teams both proactive oversight and real-world validation of performance.

Core Benefits of RUM for Digital Experience

RUM delivers strategic value across technical and business teams. For financial services organizations, the benefits extend beyond performance troubleshooting to include:

Identifying performance bottlenecks: RUM helps teams identify frontend performance bottlenecks that infrastructure monitoring may miss. Slow scripts, inefficient rendering and third-party dependencies often impact load time and interaction responsiveness.

Improving Core Web Vitals: These key indicators of experience quality include metrics such as largest contentful paint and cumulative layout shift that influence both user satisfaction and search performance. RUM shows how these metrics perform for real users, enabling targeted optimization.

Optimizing the user journey: By tracking navigation patterns and user interactions, RUM reveals friction points across critical flows. This insight helps teams streamline onboarding, authentication and transaction processes.

Supporting data-driven decisions: RUM provides a shared source of truth for engineering, UX and product teams. Decisions are based on real performance data rather than assumptions, improving alignment and prioritization.

Detecting issues that synthetic monitoring misses: Some performance issues only appear under specific real-world conditions. RUM captures these scenarios, offering valuable insights that proactive tests may overlook.

Together, these benefits make RUM a foundational element of effective web application monitoring. By connecting performance metrics with real user behavior, RUM helps teams move beyond isolated technical signals to understand how application performance impacts customer experience end to end. This broader visibility enables financial services organizations to prioritize improvements that protect trust, reduce friction and support long-term digital performance.

Key Metrics Tracked by Real User Monitoring

To fully understand digital experience quality, RUM tools track a wide range of metrics that capture an application’s performance and user interaction. By analyzing these measurements together, teams can identify performance bottlenecks, detect errors that impact users and uncover behavioral patterns that influence the overall user journey. The key metrics RUM tracks can be grouped into three main categories.

Performance Metrics

These metrics focus on how fast and responsive an application is, helping teams evaluate frontend performance and pinpoint slow or unstable experiences. Common performance metrics include:

Page load time

Response time

Time to first byte

First contentful paint

Largest contentful paint

Cumulative layout shift
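
As a rough illustration of how field data for these metrics is often judged, the sketch below rates largest contentful paint and cumulative layout shift at the 75th percentile against the thresholds Google publishes for Core Web Vitals (2.5 s and 4 s for LCP, 0.1 and 0.25 for CLS). The sample values and the helper names are assumptions made for the example.

```python
import math

# Google's published Core Web Vitals thresholds: (good_max, needs_improvement_max)
THRESHOLDS = {
    "lcp_ms": (2500, 4000),
    "cls": (0.1, 0.25),
}


def percentile(values, pct):
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def rate(metric, values):
    """Rate a metric as good / needs improvement / poor using its 75th percentile."""
    good_max, needs_improvement_max = THRESHOLDS[metric]
    p75 = percentile(values, 75)
    if p75 <= good_max:
        return p75, "good"
    if p75 <= needs_improvement_max:
        return p75, "needs improvement"
    return p75, "poor"


# Hypothetical samples collected from real sessions
lcp_samples = [1800, 2100, 2300, 2600, 3100, 1900, 2200, 4500]
cls_samples = [0.02, 0.05, 0.01, 0.08, 0.03, 0.30, 0.04, 0.06]

lcp_rating = rate("lcp_ms", lcp_samples)
cls_rating = rate("cls", cls_samples)
print(lcp_rating, cls_rating)
```

Using a percentile rather than an average means a handful of very fast sessions cannot mask a slow experience for a sizable share of users.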

Error Tracking Metrics

Error metrics reveal where technical failures occur and how they affect real users. Monitoring these issues helps teams connect system errors with their impact on the user experience. Key error tracking metrics include:

JavaScript errors

Failed API calls

Network timeouts

Error frequency by session
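
Error frequency by session can be derived from a simple event stream. The sketch below assumes a hypothetical list of `(session_id, event_type)` pairs and an illustrative set of event types that count as errors.

```python
from collections import Counter

# Hypothetical event stream: (session_id, event_type)
events = [
    ("s1", "page_load"), ("s1", "js_error"), ("s1", "js_error"),
    ("s2", "page_load"), ("s2", "api_request"),
    ("s3", "page_load"), ("s3", "api_failure"),
    ("s4", "page_load"),
]

# Assumed labels for events that count as errors
ERROR_TYPES = {"js_error", "api_failure", "network_timeout"}


def error_summary(rows):
    """Count errors per session and the share of sessions with at least one error."""
    sessions = {session_id for session_id, _ in rows}
    errors = Counter(sid for sid, etype in rows if etype in ERROR_TYPES)
    affected_share = len(errors) / len(sessions) if sessions else 0.0
    return dict(errors), affected_share


errors_per_session, affected_share = error_summary(events)
print(errors_per_session, affected_share)
```

Reporting the share of affected sessions alongside raw counts helps separate an error that hits many users once from one that hits a single user repeatedly.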

User Behavior Metrics

Understanding what users do and how they navigate an application is essential for identifying friction points and optimizing the user journey. RUM tracks behavior metrics such as:

Clicks and taps

Scrolling patterns

Navigation paths

Session abandonment
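
Click data can also point to frustration, such as a user repeatedly clicking the same element. The sketch below flags elements that receive several clicks in quick succession. The rule used here (three or more clicks within one second) and the `find_rage_clicks` helper are assumptions for illustration, not a standard definition.

```python
def find_rage_clicks(clicks, min_clicks=3, window_ms=1000):
    """Return element ids that received min_clicks or more clicks within window_ms.

    clicks: list of (timestamp_ms, element_id), in any order.
    """
    by_element = {}
    for ts, element in sorted(clicks):
        by_element.setdefault(element, []).append(ts)

    flagged = set()
    for element, times in by_element.items():
        # Check every run of min_clicks consecutive clicks on this element.
        for i in range(len(times) - min_clicks + 1):
            if times[i + min_clicks - 1] - times[i] <= window_ms:
                flagged.add(element)
                break
    return flagged


# Hypothetical clicks from one session
clicks = [
    (1000, "#submit-transfer"), (1300, "#submit-transfer"), (1550, "#submit-transfer"),
    (2000, "#nav-home"), (9000, "#nav-home"), (15000, "#nav-home"),
]
rage_elements = find_rage_clicks(clicks)
print(rage_elements)
```

Here the transfer button is flagged because it was clicked three times in about half a second, while the widely spaced clicks on the navigation link are treated as normal use.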

By combining performance, error and user behavior data, organizations gain a holistic view of digital experience. This integrated perspective allows teams to prioritize fixes based on real user impact, optimize flows that matter most and continuously improve application performance.

Real User Monitoring in Action

RUM becomes most powerful when applied to concrete challenges. By capturing actual user interactions, RUM provides insights that go beyond generic performance metrics, revealing where friction, errors or bottlenecks directly impact the user experience. This real-world visibility allows teams to take targeted actions that improve both technical performance and customer satisfaction. Some of the ways RUM data is leveraged include:

Diagnosing frontend regressions: After a release, RUM can reveal performance regressions that affect page load time or responsiveness. Teams can correlate issues with deployments and address them quickly.

Optimizing mobile app experiences: Mobile users experience greater variability in network conditions. RUM highlights how performance differs across devices and connections, helping reduce churn.

Enhancing customer journeys: RUM data shows where users struggle during key flows. Tools like struggle error analysis help teams identify and fix issues that disrupt completion.

Supporting fraud and security initiatives: Unexpected behavior patterns may also indicate risk. When combined with behavioral analytics, RUM can support efforts such as fraud monitoring.

By turning these insights into actionable improvements, teams can move from observing problems to proactively enhancing digital experiences.
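
Correlating performance with a deployment can be as simple as comparing samples on either side of the release. The sketch below assumes hypothetical `(timestamp, load_time_ms)` samples and treats a rise of more than 20% in median load time as a regression; both the threshold and the `detect_regression` helper are illustrative choices.

```python
from statistics import median


def detect_regression(samples, deploy_ts, tolerance=0.20):
    """Compare median load time before and after deploy_ts.

    samples: list of (timestamp, load_time_ms).
    Returns (before_median, after_median, regressed).
    """
    before = [ms for ts, ms in samples if ts < deploy_ts]
    after = [ms for ts, ms in samples if ts >= deploy_ts]
    if not before or not after:
        raise ValueError("need samples on both sides of the deployment")
    before_med, after_med = median(before), median(after)
    regressed = after_med > before_med * (1 + tolerance)
    return before_med, after_med, regressed


# Hypothetical samples; the release went out at timestamp 4
samples = [(1, 1200), (2, 1300), (3, 1250), (4, 1900), (5, 2100), (6, 2000)]
result = detect_regression(samples, deploy_ts=4)
print(result)
```

In practice a team would likely run this per page and per segment, since a release can slow one flow while leaving the rest untouched.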

Operationalizing Real User Monitoring Insights

Collecting RUM data is only valuable if insights lead to action. Successful organizations operationalize RUM by:

Embedding dashboards into engineering and UX workflows

Prioritizing fixes based on user impact and frequency

Running iterative optimization cycles

Combining RUM with synthetic monitoring and session replay

This approach shifts performance monitoring from reactive troubleshooting to continuous improvement.
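
Prioritizing by user impact and frequency can be expressed as a simple ordering. The sketch below is one possible scheme, not a prescribed method: it ranks hypothetical issues first by the share of sessions affected, then by how often the issue recurs within an affected session.

```python
def rank_issues(issues, total_sessions):
    """Sort issues by share of sessions affected, then by occurrences per affected session."""
    def key(issue):
        impact = issue["affected_sessions"] / total_sessions
        frequency = issue["occurrences"] / issue["affected_sessions"]
        return (impact, frequency)
    return sorted(issues, key=key, reverse=True)


# Hypothetical issues surfaced from RUM data
issues = [
    {"name": "layout shift on quote page", "affected_sessions": 300, "occurrences": 320},
    {"name": "login API timeout", "affected_sessions": 300, "occurrences": 900},
    {"name": "chart script error", "affected_sessions": 50, "occurrences": 400},
]
ranked = [issue["name"] for issue in rank_issues(issues, total_sessions=1000)]
print(ranked)
```

A real scheme would probably also weigh business factors, such as whether the issue blocks a transaction, but even a basic ordering like this keeps the fix list tied to what users actually experienced.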

Maximizing Digital Performance with Glassbox and Real User Monitoring

Selecting the right real user monitoring platform is critical, especially for financial services organizations managing complex digital ecosystems.

Glassbox combines RUM with advanced behavioral analytics to provide deep visibility into user experience. Features like struggle error analysis, interaction maps and heatmaps help teams transform RUM data into actionable insights that improve Core Web Vitals, frontend performance and user satisfaction.

Session replay extends this visibility by allowing teams to observe actual user sessions. By seeing exactly how users interact with applications, teams can uncover the root causes of performance issues and identify opportunities for ongoing optimization.

By integrating Glassbox RUM and session replay, organizations gain full visibility across the digital journey. This enables proactive issue resolution, continuous performance improvement and more reliable digital experiences that build trust and drive long-term value.